LLMs.txt: What It Is, What It Is Not, and Where It Fits

Gives a balanced view of LLMs.txt and where it should sit in a broader machine-readability strategy.

Practical frameworks to help businesses navigate Answer Engine Optimization and sustainable organic expansion.

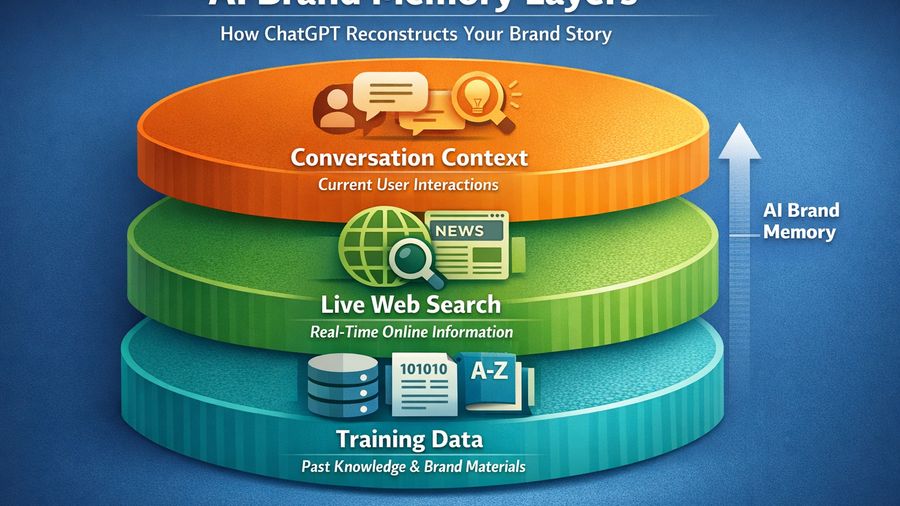

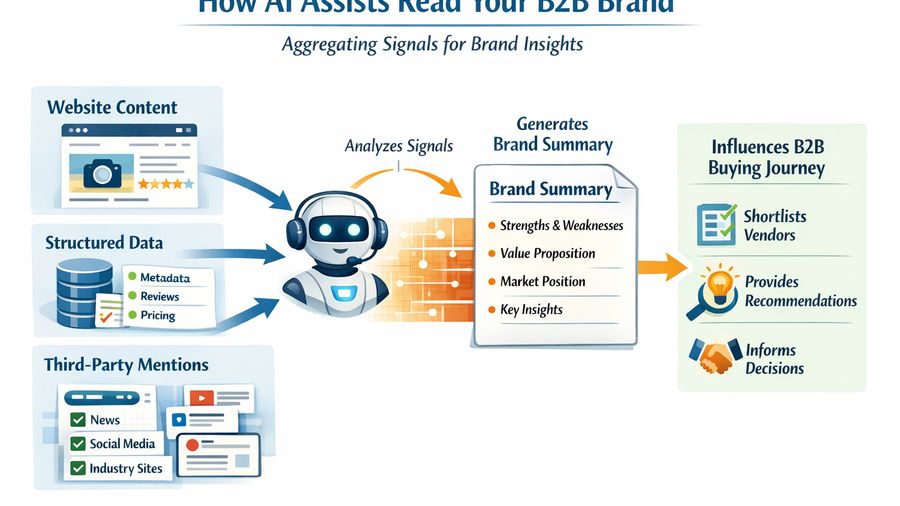

Explains how AI systems use public web signals, repeated mentions, and trustworthy source framing to form brand understanding.

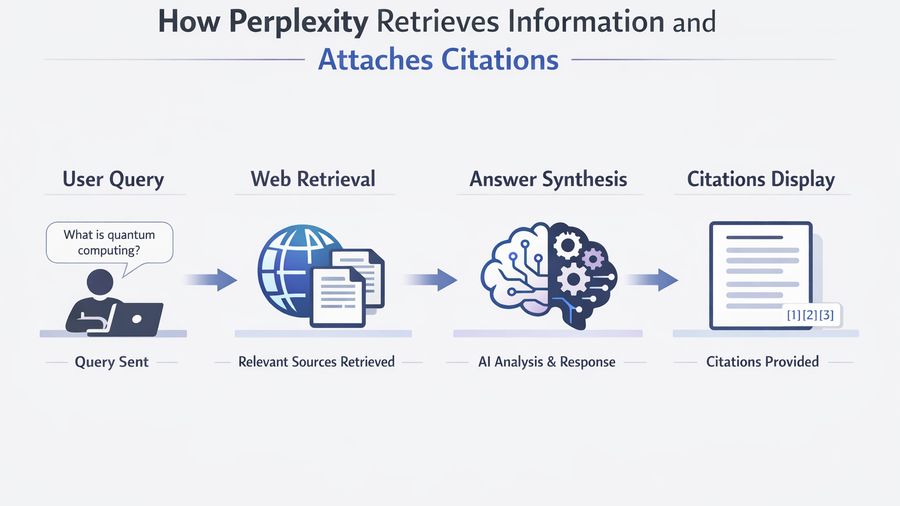

Analyzes the types of pages and evidence patterns that are more likely to be surfaced as citations in AI answers.

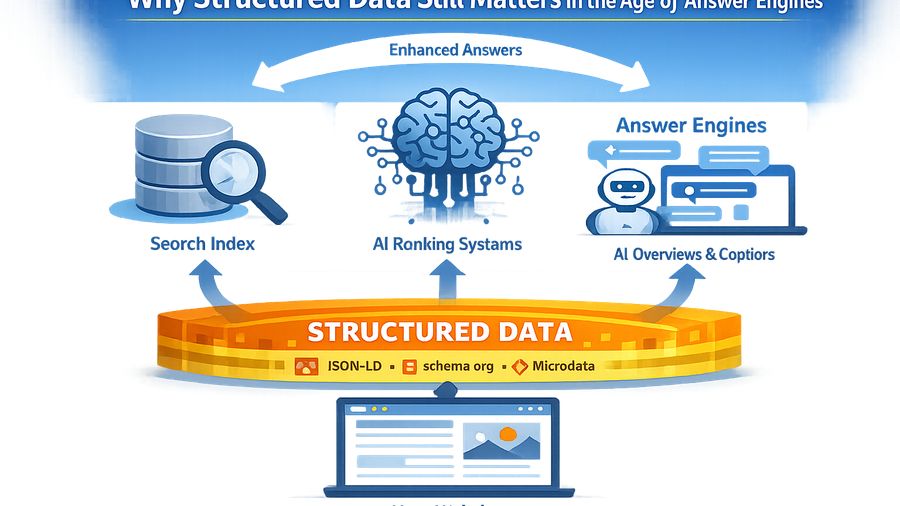

Shows how schema, clean entity language, and evidence-backed copy help machines understand a company with less ambiguity.

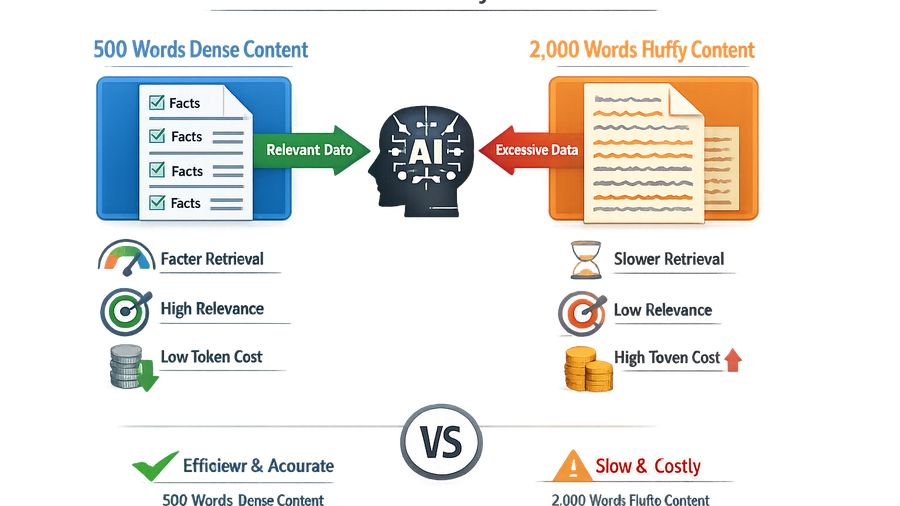

Explains why compressed, information-rich content often performs better for AI retrieval than padded blog posts.

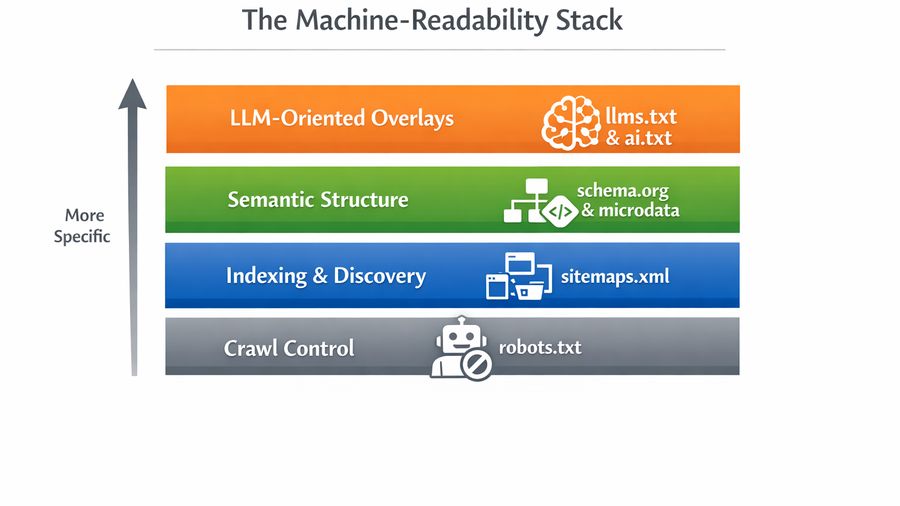

Separates useful structured data implementation from checklist theater and shows which schema patterns support trust and retrieval.

Gives a tactical framework for writing concise, sourceable, retrieval-friendly content that survives summarization.

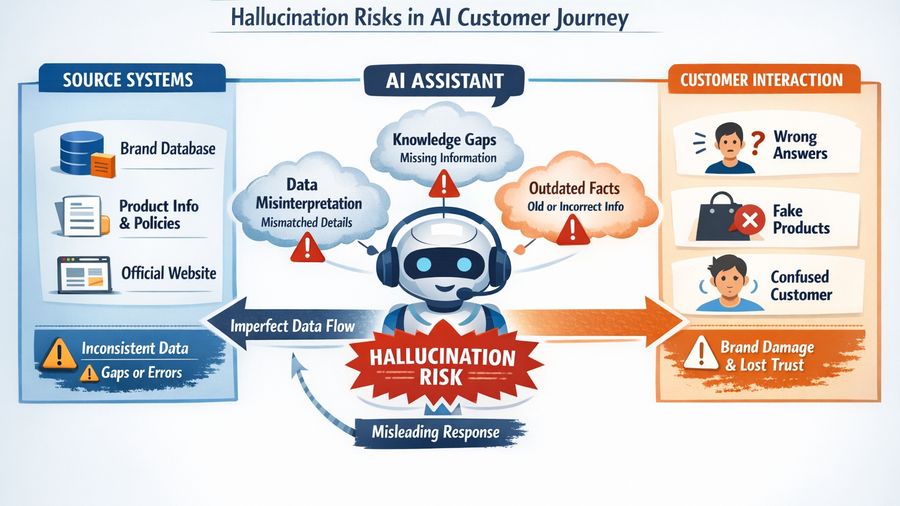

Explains why models misstate brand facts and what content architecture reduces those errors.

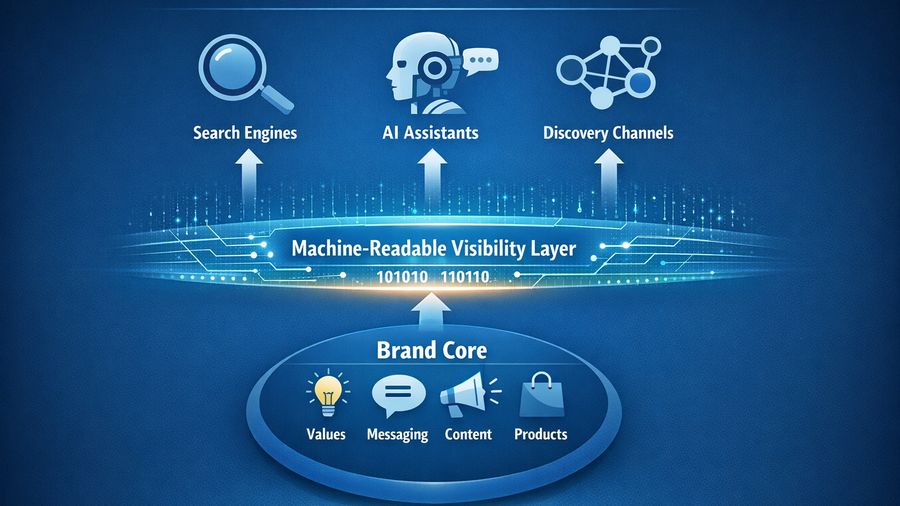

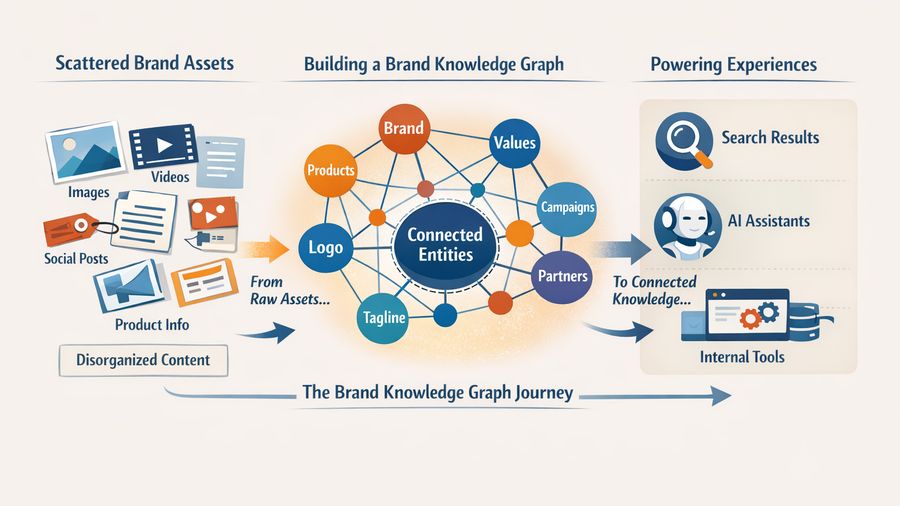

Introduces the idea of a brand knowledge graph and shows how products, founders, claims, and proof points become machine-readable assets.

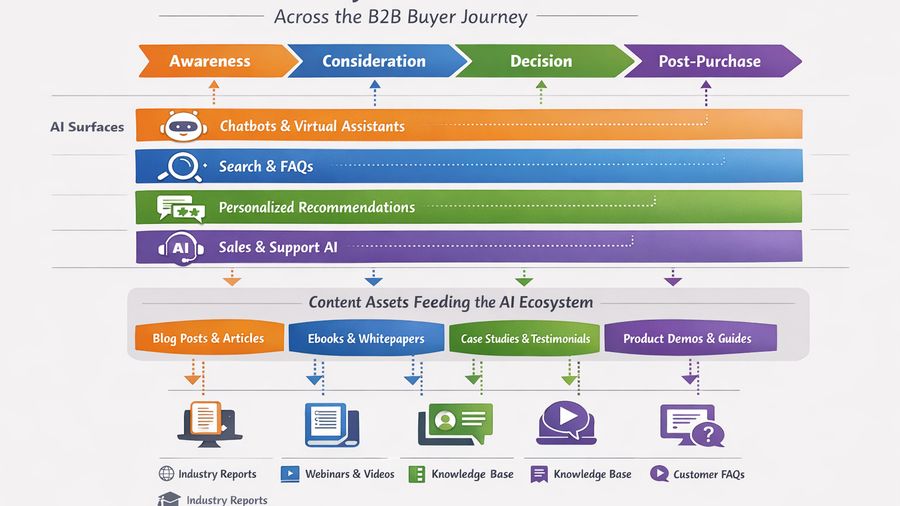

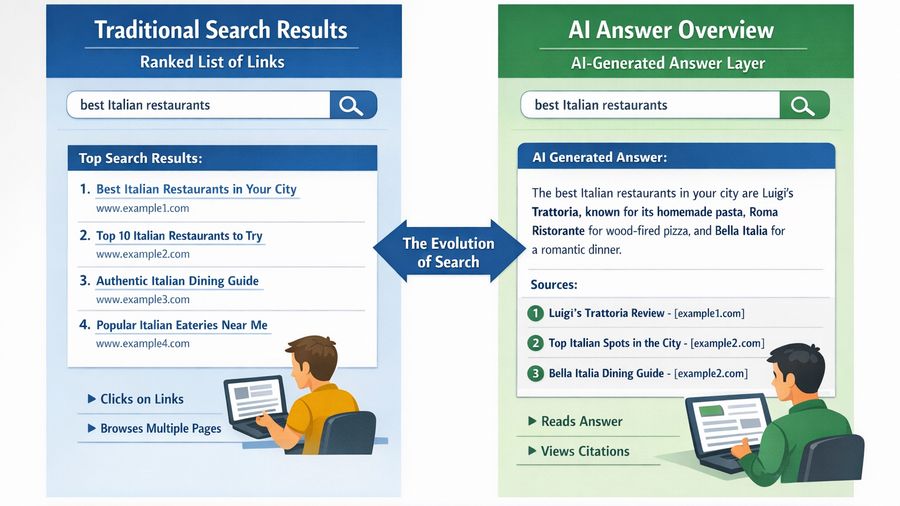

Explains the transition from classic ranking tactics to content systems designed for AI summaries, citations, and recommendation layers.

Breaks down how LLMs interpret a brand across pages, structured data, third-party mentions, and repeated semantic patterns.

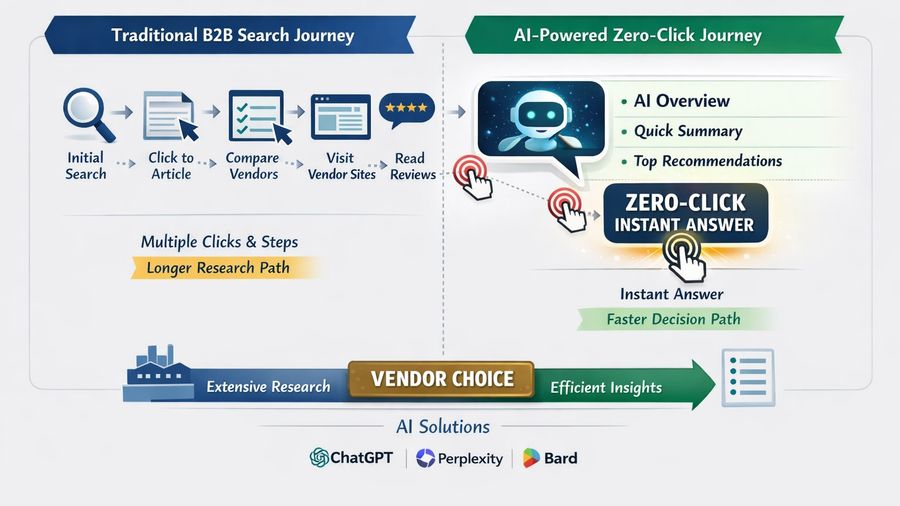

Shows how AI Overviews, ChatGPT, and Perplexity compress journeys into answers, changing how brands should measure organic success.